What are live captions, and why do they matter in today's digital space?


Published on: December 11, 2023

Here's a fact that may raise a few eyebrows: the first modern subtitled films were created by a deaf actor and producer to make them accessible. Fast-forward 75 years, and today 85% of Netflix viewers in the UK use closed captions, for reasons ranging from better comprehension to improved concentration.

Captioning has become ubiquitous, and you'll encounter it almost anywhere there is audiovisual content: videos, presentations, virtual meetings, and elsewhere. Two factors have made this possible. First, significant advances in speech-to-text technology have made it all but trivial to generate captions and integrate them into media. Second, as the Netflix statistic above shows, there's demand.

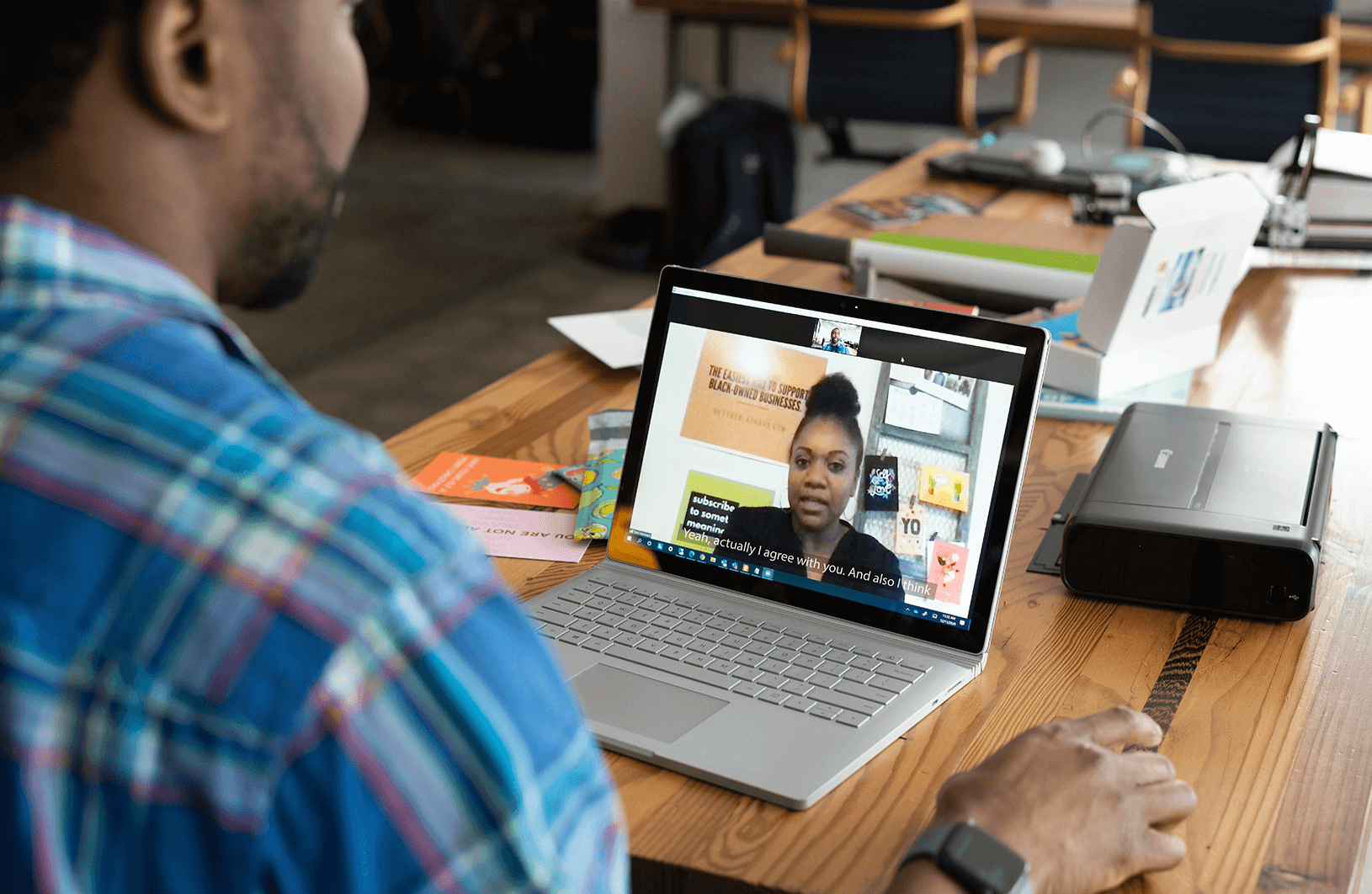

Captions have become more important than ever, not just for accessibility in an increasingly digital world, but for productivity and quality of life, too. Live captions enable hard-of-hearing students to participate in hybrid classes. Live transcriptions help meeting participants miss less information. And subtitles help you get more out of your favorite TV show.

Live captions are particularly interesting because they are the latest frontier in the evolution of speech-to-text. While subtitles may be ubiquitous, live captions are only now starting to see widespread adoption in educational institutions and corporate workplaces.

In this article, we'll take a look at what live captioning is, how it works, and why more and more people are using it.

What is live captioning and how does it differ from other speech-to-text processes?

Live captioning, sometimes also called real-time captioning or live transcription, is the transcription of spoken content as it happens, with the text displayed directly to viewers or participants so they can read and understand what is being said in the moment.

Live captioning is typically used in lectures, meetings, and conferences, where turning speech into text improves accessibility and helps participants, especially remote ones, absorb information. For instance, people with hearing impairments may be able to take part in meetings, and students who learn better through reading may perform better in class.

There are two key factors that set live captioning apart from other speech-to-text processes.

The first is the real-time element. Subtitles, for instance, are typically generated and polished before being integrated into media, whereas live captions are a direct, raw transcription of spoken content as it happens.

The second is the instant display of captions. This contrasts with general transcription tools, which, while functionally very similar, will typically provide the written content after the fact and in a separate file.

Advances in speech-to-text have spawned a plethora of tools, and it's easy to get mixed up about what each one does, so here's a quick breakdown:

- Transcriptions - These tools convert spoken language into written text, making it easier to create written records of spoken content, such as interviews or lectures.

- Automatic translations - Unlike live captions, which render speech as text in the language it was spoken, automatic translation tools instantly convert spoken content into other languages, facilitating cross-lingual communication.

- Automatic note-taking - These tools generate summarized notes from spoken discussions, aiding in meeting documentation and facilitating better information retention.

- Meeting note summaries - These provide a condensed version of lengthy meetings, saving time by offering a concise overview of the key points discussed.

Each of these processes serves a distinct purpose, and live captioning stands out for its on-the-fly transcription and immediate display, which make it a vital tool for accessibility and comprehension. That said, modern captioning tools often combine several of these functions, for example, by offering both live captions during an event and a full transcript afterwards.

How live captioning works and the importance of crystal clear audio input

Live captioning uses speech recognition software that listens to spoken content and converts it into written text in real time. The text is then synchronized with the audio and displayed on screen, all within a fraction of a second.
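To make the real-time element more concrete, here is a minimal Python sketch of that loop. It assumes audio arrives in fixed-length chunks (0.5 seconds here, an arbitrary choice) and that `recognize` is a hypothetical stand-in for whatever speech recognition engine is actually in use. Real engines usually stream partial results and revise them as more audio arrives, which this sketch leaves out; it only shows how each chunk is transcribed and timestamped as soon as it arrives, rather than after the event.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Caption:
    start_s: float  # seconds from the start of the stream
    end_s: float
    text: str


def live_captions(
    chunks: Iterable[bytes],
    recognize: Callable[[bytes], str],  # hypothetical speech-to-text engine
    chunk_s: float = 0.5,  # assumed length of each audio chunk, in seconds
) -> Iterator[Caption]:
    """Yield a timestamped caption for each chunk as soon as it is transcribed."""
    elapsed = 0.0
    for chunk in chunks:
        text = recognize(chunk).strip()
        if text:  # chunks with no recognized speech produce no caption
            yield Caption(elapsed, elapsed + chunk_s, text)
        elapsed += chunk_s


if __name__ == "__main__":
    # A fake recognizer standing in for a real engine.
    fake = {b"chunk-1": "Welcome, everyone", b"chunk-2": "", b"chunk-3": "to today's lecture"}
    for cap in live_captions(fake.keys(), lambda c: fake[c]):
        print(f"[{cap.start_s:.1f}-{cap.end_s:.1f}s] {cap.text}")
    # prints:
    # [0.0-0.5s] Welcome, everyone
    # [1.0-1.5s] to today's lecture
```

Because `live_captions` is a generator, a display can render each caption the moment it is yielded, which is the key difference from a transcription tool that hands over a finished file afterwards.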

Recent advances in artificial intelligence (AI) have also improved caption quality by correcting obvious mistakes, which used to be very common in live captions. You can still see such issues in YouTube's auto-generated captions, which can struggle with anything but perfectly clear English.

Modern AI tools can enhance caption quality by taking into account the context in which each word is spoken, helping the speech recognition software produce the most likely and accurate caption rather than simply transforming sound into text.

Crystal clear audio: the key to live captions

Still, even AI may struggle unless one key criterion is met: crystal clear audio input. To do its job, speech recognition software must be able to actually recognize speech, which is difficult when the audio is muffled, quiet, laggy, or otherwise unintelligible.

Accordingly, to make live captions work, it's critical to:

- Use a high-quality audio capture device.

- Keep the microphone close to the source of sound, i.e., whoever is speaking.

- Have little to no background noise or other noise pollution that may corrupt the audio.

For these reasons, the Catchbox Plus system has become a go-to audio solution for universities and companies seeking to integrate live captioning into their day-to-day operations. It comes with two wireless microphones: a compact, hands-free Clip microphone for the presenter and a throwable Cube microphone for the audience.

The dual-microphone setup is key in hybrid environments with both in-person and remote groups, because it captures high-quality audio from everyone in the room without distractions.

Indeed, capturing crisp audio is typically a major challenge in large, open spaces such as lecture halls and conference rooms. Common solutions, such as ceiling array microphones, often produce suboptimal results because they pick up background noise along with speech, degrading audio quality.

Passing the Cube between audience members keeps the microphone close to whoever is speaking, which minimizes background noise, and throwing it is the fastest way to get it there.
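The "quiet" and "background noise" criteria above can be checked before a session starts. The following Python sketch is one simple way to do it, not a feature of any particular captioning product. It assumes 16-bit PCM samples, a short recording of someone speaking plus a few seconds of room noise with nobody speaking, and the -30 dBFS and 15 dB thresholds are illustrative assumptions rather than published requirements.

```python
import math
from typing import List, Sequence

FULL_SCALE = 32768  # peak magnitude of 16-bit signed PCM audio


def rms(samples: Sequence[int]) -> float:
    """Root-mean-square amplitude of a block of PCM samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def level_dbfs(samples: Sequence[int]) -> float:
    """Average level relative to digital full scale (0 dBFS is the maximum)."""
    r = rms(samples)
    return -math.inf if r == 0 else 20 * math.log10(r / FULL_SCALE)


def snr_db(speech: Sequence[int], noise: Sequence[int]) -> float:
    """Rough signal-to-noise ratio: a speech segment vs. a noise-only segment."""
    s, n = rms(speech), rms(noise)
    if n == 0:
        return math.inf
    if s == 0:
        return -math.inf
    return 20 * math.log10(s / n)


def check_input(
    speech: Sequence[int],
    noise: Sequence[int],
    min_level_dbfs: float = -30.0,  # hypothetical threshold
    min_snr_db: float = 15.0,  # hypothetical threshold
) -> List[str]:
    """Return a list of problems with the captured audio; empty means it looks OK."""
    problems = []
    if level_dbfs(speech) < min_level_dbfs:
        problems.append("too quiet: move the microphone closer to the speaker")
    if snr_db(speech, noise) < min_snr_db:
        problems.append("too noisy: reduce background noise")
    return problems
```

For example, speech at a steady amplitude of 10,000 over a noise floor of 100 gives a level of about -10 dBFS and an SNR of 40 dB, so `check_input` returns an empty list. The same speech picked up at an amplitude of 300, as from a microphone too far from the speaker, fails both checks, which is why keeping the microphone close to whoever is talking matters so much.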

The positive impact of next-generation captioning technology

Live captions are, first and foremost, about accessibility, and they help level the playing field in an increasingly digital world. They break down communication barriers for people with hearing impairments and make it possible for everyone to fully participate in education, work, and live entertainment regardless of location or ability.

But the integration of captions goes beyond that, boosting not just accessibility, but inclusivity, too. By accommodating diverse learning styles, offering an additional avenue for information absorption, and generally improving the quality of our digital lives, live captions can have a profound impact on the way we connect, learn, and engage with content.

Captioning tech is also helping the workplace evolve. Note-taking and summary tools improve the quality of company meetings and overall productivity, freeing up time for higher-value work, while companies also reap the rewards of a more inclusive and accessible workplace.

Toward a better future

More and more organizations are integrating live captions, but adoption is still far from widespread. For now, live captioning remains a differentiator for companies and educational institutions: a way to stand out from the crowd, boost productivity, and offer a better digital experience than competitors.

Still, with the ease of integration and the enormous value live captions bring, we believe that, in the coming years, live captions will be as ubiquitous as subtitles – to the benefit of absolutely everyone.
